There’s a moment, usually about three days into using any AI video generator, where the initial thrill quietly shifts into something more complicated.

The first output looked impressive. The second one felt like magic. By the fifth or sixth attempt, you start noticing things — a weird visual artifact, a motion that doesn’t quite land, a result that looked great as a thumbnail but falls apart at full resolution. This is where the real learning begins, and it’s the part most blog posts skip entirely.

I want to talk about that phase. Not the “look what AI can do” phase, but the one right after it — where a solo creator or small business owner has to decide whether an AI-powered visual tool is genuinely useful for their actual work, or just interesting in the abstract.

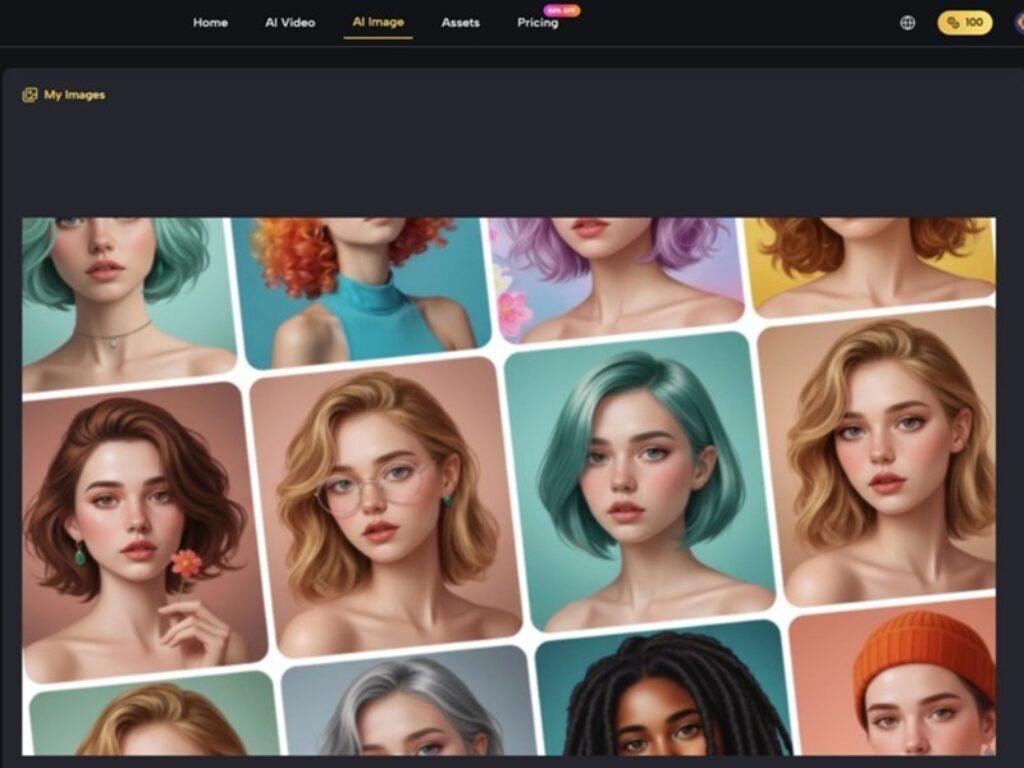

MakeShot positions itself as an all-in-one AI studio for generating professional-grade videos and images, powered by models like Veo 3, Sora 2, and Nano Banana. That’s a notable combination of engines under one roof. But what does it feel like to actually sit down with a tool like this and try to get something done?

Let’s talk about that honestly.

The Gap Between a Demo and a Workflow

Most people discover an AI video generator through a striking example — a clip someone shared on social media, a demo reel, a before-and-after post. That example was almost certainly the best output from a longer session of experimentation. What you didn’t see were the twelve attempts that preceded it. This isn’t a criticism. It’s just how prompt-based creation works right now.

What tends to happen is this: a beginner types in a fairly detailed prompt, gets back something visually interesting but slightly off from what they imagined, and then spends the next thirty minutes trying to figure out which words to change to get closer to the result they actually need. The tool is fast. The iteration loop is not — because the bottleneck isn’t generation speed, it’s the user’s ability to communicate visually through text.

With a platform like MakeShot offering access to multiple AI models, there’s an added layer of decision-making. Which model should you use for which kind of output? That’s not always obvious upfront, and the answer usually comes through trial and error rather than documentation.

What Beginners Tend to Misjudge Early On

Two things consistently surprise people in their first few weeks with AI video and image generation tools.

First: output quality is inconsistent by nature. You can run the same prompt twice and get noticeably different results. For someone used to traditional editing software — where actions produce predictable outcomes — this feels disorienting. It’s not a bug. It’s a fundamental characteristic of generative models. But it does mean that “professional-grade results,” as MakeShot describes its aim, depend partly on the user’s willingness to curate and select from multiple outputs rather than expecting a single perfect render.

Second: the time savings are real, but they show up in unexpected places. AI video generators don’t necessarily make the final product faster to finish. What they compress is the ideation and drafting stage. Instead of spending two hours setting up a rough concept in a traditional editor, you might get a usable starting point in minutes. But refining that starting point — adjusting tone, pacing, visual coherence — still requires human judgment. Often more judgment than beginners expect.

I’ve noticed that people who get the most value from these tools are the ones who stop treating them as “finished output machines” and start treating them as draft generators. That mental shift usually takes a few weeks.

Where a Multi-Model Platform Changes the Calculation

One thing worth pausing on is MakeShot’s approach of bundling several generation models — Veo 3, Sora 2, and Nano Banana — into a single platform. For someone evaluating an AI video generator, this matters for a practical reason that isn’t immediately obvious.

Different models have different strengths. Some handle motion better. Some produce more photorealistic textures. Some are faster but less detailed. When these models live on separate platforms, comparing them means managing multiple accounts, learning multiple interfaces, and mentally tracking which tool gave you which result.

Having them in one place reduces that friction. Whether MakeShot’s implementation makes switching between models intuitive is something I can’t confirm from the product description alone. But the concept of a unified workspace for multi-model generation is genuinely useful for anyone doing comparative testing, which is exactly what early adoption looks like in practice.

A fair caution here: more options don’t automatically mean better outcomes. Sometimes having three models available just means three times as many outputs to evaluate. For a solo creator working on a deadline, that abundance can become its own kind of slowdown if there’s no clear framework for choosing.
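One possible "clear framework" is nothing fancier than a ratings log. The sketch below is only an illustration: the model labels, use cases, and scores are made-up placeholders rather than measurements of any real model, and the 1-to-5 scale is my own assumption. The idea is to score each output by eye as you go, then let the averages tell you which model to reach for first on a given kind of job.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical ratings log: (model label, use case, 1-5 score assigned by eye).
# These values are illustrative placeholders, not results from any real model.
ratings = [
    ("model_a", "product_shot", 4),
    ("model_a", "product_shot", 3),
    ("model_b", "product_shot", 5),
    ("model_b", "talking_head", 2),
    ("model_a", "talking_head", 4),
]


def best_model_per_use_case(rows):
    """Average scores per (use case, model); return the top model for each use case."""
    grouped = defaultdict(list)
    for model, use_case, score in rows:
        grouped[(use_case, model)].append(score)

    averages = defaultdict(dict)
    for (use_case, model), scores in grouped.items():
        averages[use_case][model] = mean(scores)

    # For each use case, pick the model with the highest average score.
    return {uc: max(models, key=models.get) for uc, models in averages.items()}


print(best_model_per_use_case(ratings))
```

A spreadsheet does the same job. What matters is writing the scores down, so the choice on a deadline comes from your own record rather than from whichever output you happen to remember.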

The Part That Usually Takes Longer Than Expected

Let me be specific about something. The hardest part of working with any AI video generator isn’t generating the video. It’s everything that happens around it.

Deciding what you actually need. Writing a prompt that reflects that decision. Evaluating whether the output matches your intent or just looks cool. Figuring out if the result works in context — on your website, in your social feed, inside a presentation. These surrounding decisions are where most of the time goes, and no AI model, however advanced, makes those decisions for you.

People often notice after a few tries that their prompt-writing skills matter more than the tool’s capabilities. A vague prompt given to a powerful model produces a vague result. A precise prompt given to a modest model often produces something more usable. This is counterintuitive for beginners who assume the technology does the heavy lifting.
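To make the vague-versus-precise difference concrete, here is a minimal sketch of a prompt checklist. The field names (subject, action, setting, camera, style) are my own assumption about what is worth specifying, not a format that MakeShot or any particular model requires, and the helper only assembles text; it does not call any generation service.

```python
def build_prompt(subject, action="", setting="", camera="", style=""):
    """Join whichever details are filled in into one comma-separated prompt."""
    parts = [subject, action, setting, camera, style]
    return ", ".join(p.strip() for p in parts if p.strip())


# A vague prompt leaves almost every visual decision to the model.
vague = build_prompt("a coffee shop")

# A precise prompt states the decisions you have already made.
precise = build_prompt(
    subject="a barista pouring latte art",
    action="slow, steady pour",
    setting="small sunlit cafe, morning",
    camera="close-up, shallow depth of field",
    style="warm, natural colors",
)

print(vague)
print(precise)
```

Every empty field is a decision handed to the model. Filling the fields in does not guarantee a better result, but it shows which choices you have actually made and which you are leaving to chance.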

MakeShot’s promise of being an “easy” AI video generator and image creator is appealing, and the generation step itself may well be straightforward. But ease of generation and ease of getting what you need are two different things. The gap between them is where your creative judgment lives.

How to Tell If a Tool Is Worth Returning To

Here’s a question worth sitting with: after the novelty wears off, what makes someone keep using an AI visual tool?

From what I’ve observed — both in my own exploration and in watching how others describe their workflows — the answer is rarely about features. It’s about whether the tool fits into an existing rhythm of work without creating more problems than it solves.

A few honest signals that a tool like MakeShot might be worth continued use:

- You find yourself reaching for it during real projects, not just experiments.

- The outputs require less manual correction over time as your prompting improves.

- You stop comparing every result to what a human designer would produce and start evaluating it on its own terms.

- The platform doesn’t fight you when you need to move quickly.

And a few signals that it might not be the right fit:

- Every output needs heavy post-editing in another application.

- You spend more time troubleshooting prompts than you’d spend creating manually.

- The results look impressive in isolation but don’t match your brand’s visual language.

None of these signals are about the tool being “good” or “bad.” The decision is less about the tool itself and more about the specific intersection of your needs, your skill level, and your tolerance for iteration.

A Grounded Takeaway

There’s no shortage of AI video generators available right now, and MakeShot’s multi-model approach — combining Veo 3, Sora 2, and Nano Banana in one studio — is a genuinely interesting proposition for anyone who wants to explore different generation styles without juggling platforms. That consolidation has real practical value.

But the thing worth remembering is this: the tool is only one variable. Your ability to prompt clearly, evaluate critically, and integrate AI-generated visuals into a coherent workflow — those skills develop slowly, through repetition, through mild frustration, through the gradual realization that “easy” and “effective” aren’t always the same thing.

If you’re approaching MakeShot or any AI video generator for the first time, give yourself a few weeks before forming a verdict. The first day’s impressions are almost never the ones that stick.